Design Patterns For Building Trust

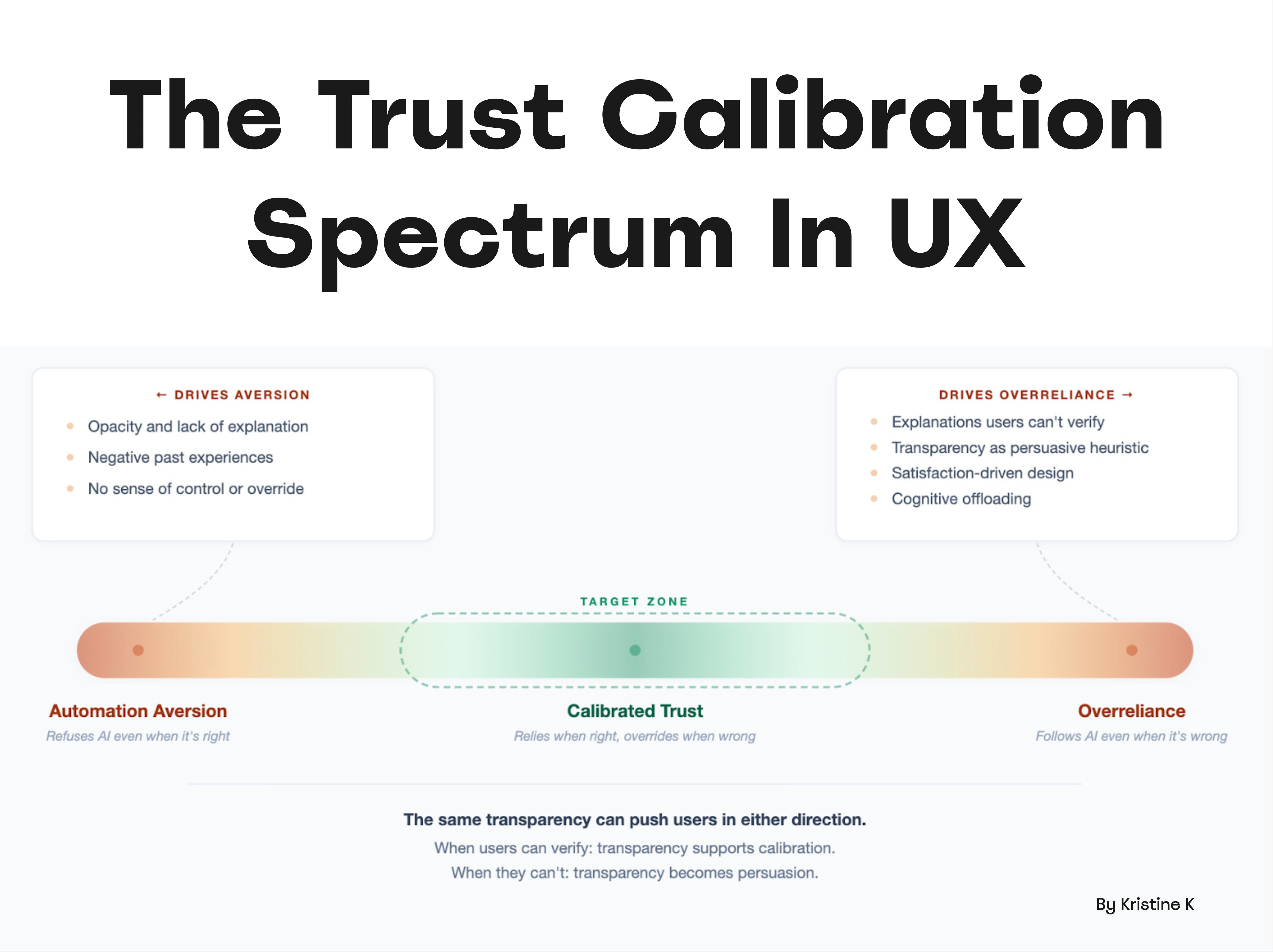


We often assume that the safest way to build user trust is through transparency. We show users what happens, we explain the rationale behind decisions, we break down outcomes into step-by-step instructions that make sense. That's how we make products feel more reliable and trustworthy.

However, the reality is far more nuanced, particularly with AI products. As Kristine K beautifully writes, "trust in AI sits on a spectrum." The goal, then, shouldn't be to push users toward maximum trust.

We want to reach calibrated trust: people relying on AI when it's right and overriding it when it's wrong.

Trust is a spectrum: from aversion to calibrated trust to overreliance. Reliable products hit the target zone in the middle.

Aversion vs. Overreliance

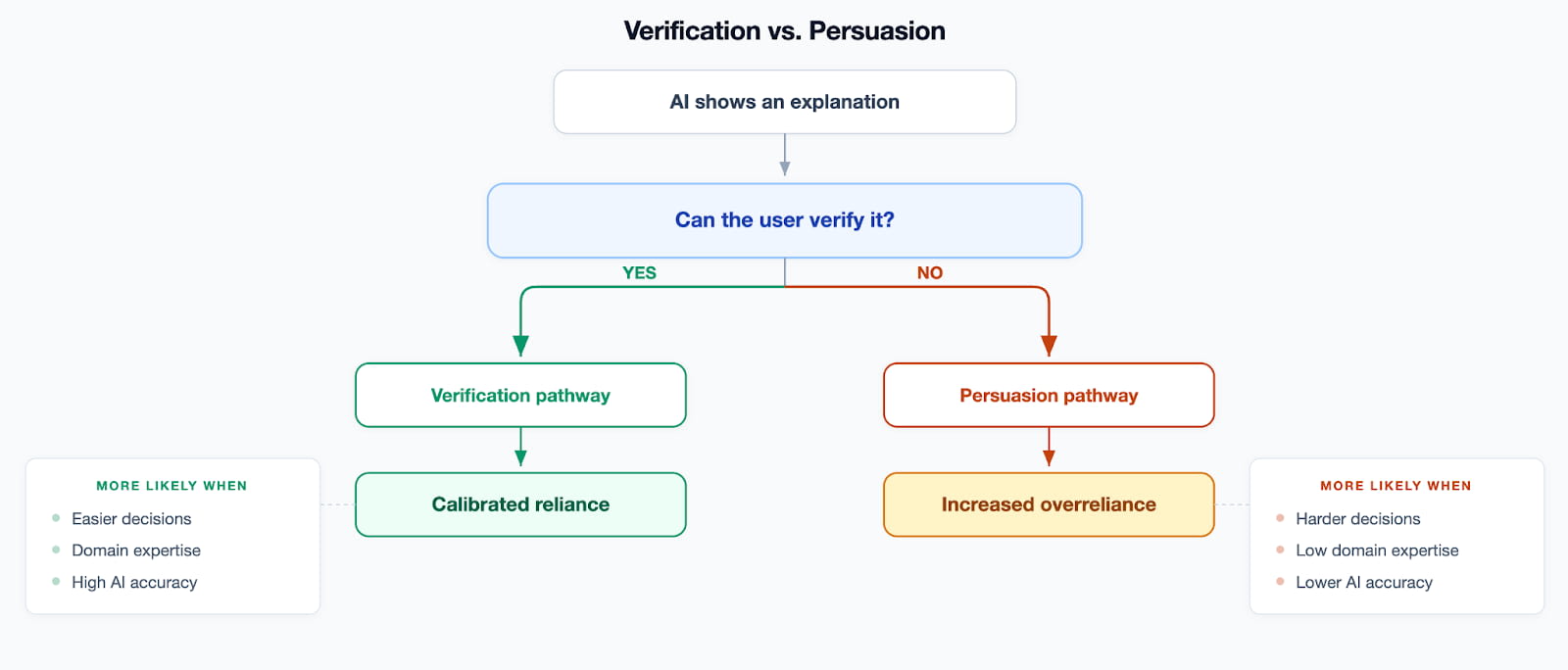

In UX, our goal is typically to prevent both aversion and overreliance. The critical part isn't really transparency, but rather whether users can reliably verify a system's output. This becomes a particular challenge with AI products.

Users sometimes refuse to engage with AI even when it would be genuinely beneficial, leading to aversion. Others follow AI recommendations on autopilot, even when those recommendations are flawed, resulting in overreliance.

Verification vs. Persuasion: the critical part isn't transparency, but whether users can actually verify output reliably.

In other words, trust comes from "calibrating reliance": making decisions easier to verify and (this part is often forgotten) matching explanations to the user's domain expertise.

Aversion often emerges due to several factors:

- Opacity and lack of explanation

- Negative past experiences that erode confidence

- No sense of control

- No ability to override decisions

Overreliance often emerges with:

- Explanations that users can't verify

- Transparency used to persuade users rather than to provide insight

- Satisfaction-driven UX, where positive feedback is favored over critical evaluation

- Cognitive offloading, when users delegate thinking to AI

Goal: Calibrated Trust

The ideal state is not maximum trust, but calibrated trust: users rely on the product when it works correctly and override it when it hallucinates. As it turns out, the very same transparency mechanism can push users toward either extreme.

When users can effectively verify output, transparency supports calibration. However, if they cannot verify, transparency becomes a tool for persuasion, leading to auto-acceptance.
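If you want to check whether a product actually lands in that middle zone, one option is to log each AI suggestion together with whether it turned out to be correct and whether the user accepted it. Below is a minimal sketch in Python, assuming you have such ground-truth labels. The `Interaction` record and the outcome names are hypothetical and do not come from any of the cited sources:

```python
from collections import Counter
from dataclasses import dataclass

OUTCOMES = ("calibrated_reliance", "calibrated_override", "overreliance", "aversion")


@dataclass(frozen=True)
class Interaction:
    ai_correct: bool     # was the AI's suggestion actually right (ground truth)?
    user_accepted: bool  # did the user rely on the suggestion?


def classify(i: Interaction) -> str:
    """Place one interaction in one of the four reliance outcomes."""
    if i.user_accepted:
        # Relying on a correct answer is calibrated; relying on a wrong one is overreliance.
        return "calibrated_reliance" if i.ai_correct else "overreliance"
    # Overriding a wrong answer is calibrated; rejecting a correct one is aversion.
    return "aversion" if i.ai_correct else "calibrated_override"


def trust_report(log: list[Interaction]) -> dict[str, float]:
    """Share of interactions per outcome, plus the combined calibrated share."""
    counts = Counter(classify(i) for i in log)
    total = len(log) or 1  # avoid dividing by zero on an empty log
    report = {outcome: counts[outcome] / total for outcome in OUTCOMES}
    report["calibrated"] = report["calibrated_reliance"] + report["calibrated_override"]
    return report


log = [
    Interaction(ai_correct=True, user_accepted=True),
    Interaction(ai_correct=True, user_accepted=True),
    Interaction(ai_correct=False, user_accepted=True),
    Interaction(ai_correct=False, user_accepted=False),
]
print(trust_report(log))
# {'calibrated_reliance': 0.5, 'calibrated_override': 0.25, 'overreliance': 0.25, 'aversion': 0.0, 'calibrated': 0.75}
```

The sketch reports overreliance and aversion separately rather than as a single "trust" number. A rising acceptance rate can look like success, but if the extra acceptances are wrong answers, it is overreliance.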

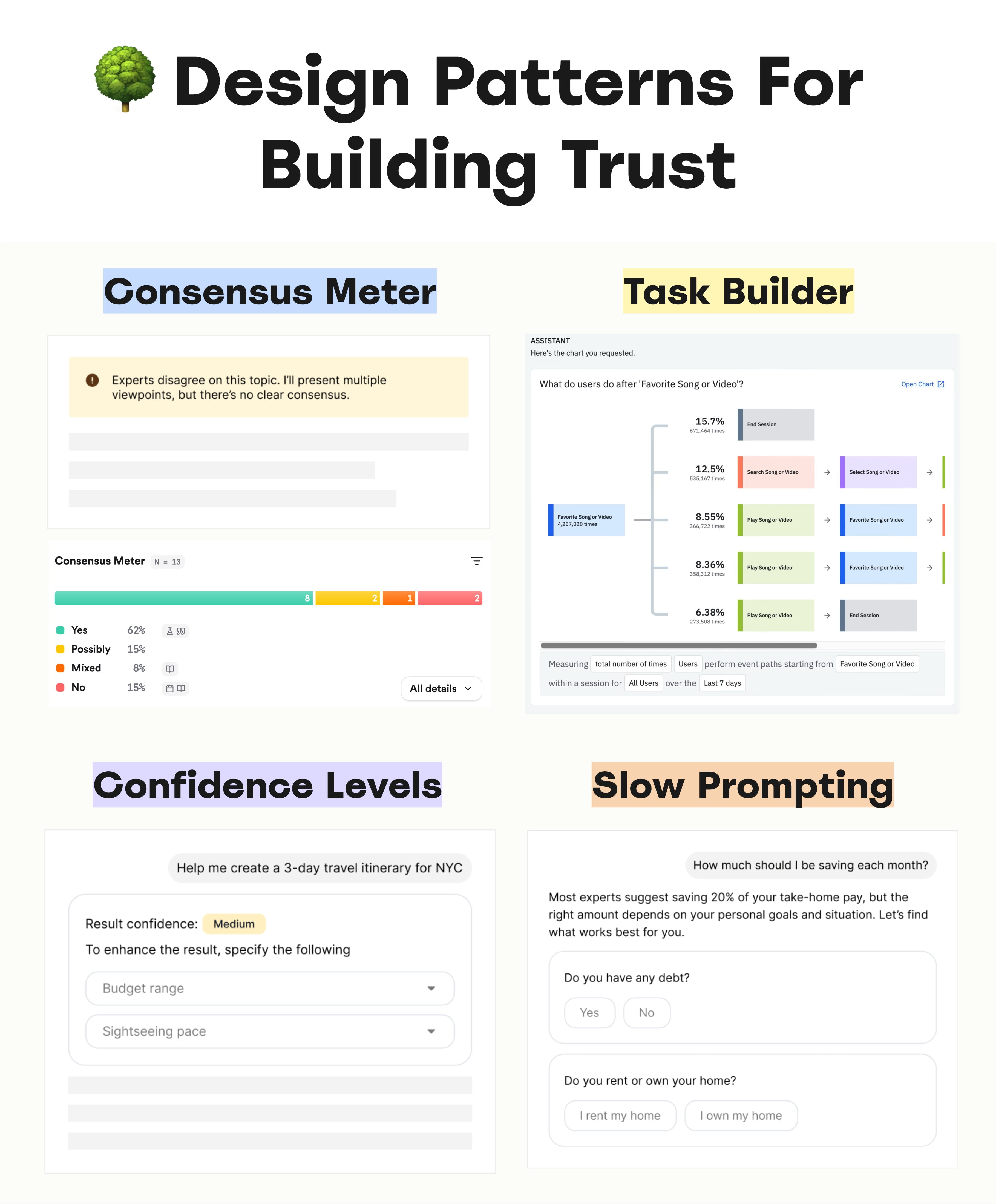

Design patterns for building trust: consensus meter, task builder, slow prompting.

According to Anyi Sun, there are five psychological foundations of user trust:

- Reliability 🔰: The degree to which the product consistently behaves as expected. It's a sense that the product is dependable, based on a track record of past actions. Reliability comes from promising what you can do, and doing what you promised.

- Technical Competence ⚡: The perceived intelligence, sophistication and capability of the product. It's the user's belief that the product can successfully perform what it is being trusted to do. It's about trusting a product's capability.

- Understandability 🧠: The extent to which users feel they can understand how the system works or why it made a certain decision. The product must be able to articulate how it arrived at a decision, with references to the fragments that underpin it.

- Faith and Care 🌱: Emotional, almost "blind" trust in the product, especially when users don't understand the underlying logic. It's a belief that the trusted party actually cares about a positive outcome for you and intends to do good.

- Personal Attachment 🌳: A sense of rapport, connection or emotional engagement with the product. It typically emerges when users feel that they get meaningful value from the product and from their interactions with the people supporting it.

Design Patterns For Building Trust

With AI, hitting all these psychological foundations is extremely hard. Some people trust AI almost instinctively; others are more critical. But people's attitudes often change dramatically once they realize they've made severe mistakes because of AI. Recovering from that is very hard.

Design patterns for building trust, a wonderful catalog of design patterns by IF.

A few design patterns can help:

- Avoid open-ended "Ask me anything" prompts → push for scoping and constraints

- Slow down prompting → request specific details

- Present many viewpoints, explain that experts disagree

- Highlight what is AI and what isn't (AI disclosure)

- Allow users to override AI suggestions manually

- Allow users to tweak AI output and refine it

- Adapt AI's tone depending on the severity of the user's task
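Scoping and slow prompting can be as simple as declining to answer until the request carries the details it needs. Below is a minimal sketch, assuming each supported task type has a fixed list of required details. The task names, fields and question wording are hypothetical placeholders:

```python
# Hypothetical task types and the details each one needs before the AI answers.
REQUIRED_DETAILS: dict[str, list[str]] = {
    "trip_plan": ["destination", "travel_dates", "budget"],
    "contract_review": ["jurisdiction", "contract_type"],
}


def follow_up_questions(task: str, provided: dict[str, str]) -> list[str]:
    """Return one question per required detail the user hasn't supplied yet."""
    required = REQUIRED_DETAILS.get(task)
    if required is None:
        # No open-ended fallback: unscoped requests are sent back for scoping.
        raise ValueError(f"Unsupported task {task!r}; ask the user to pick a scope first")
    return [
        f"Could you share the {detail.replace('_', ' ')}?"
        for detail in required
        if not provided.get(detail, "").strip()  # blank answers count as missing
    ]


questions = follow_up_questions("trip_plan", {"destination": "Lisbon", "budget": " "})
print(questions)
# ['Could you share the travel dates?', 'Could you share the budget?']
```

Unsupported tasks raise an error instead of falling back to a generic answer. That is roughly the code-level equivalent of not offering "Ask me anything".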

Trust is why people stay or leave. It builds long-term loyalty and helps users overcome hesitation. But it has to be designed and maintained across all psychological foundations, with thoughtful UX work.

Note on Confidence Scores

A common challenge is confidence scores. They are often presented as a bare number without any meaningful context. But a 37% confidence level rarely offers actionable insight, and an 89% score doesn't clarify what's actually missing for the output to be reliable.

As a result, users might not act at all, or they proceed without a clear understanding of the consequences. And because AI often sounds authoritative and logical, people who lack domain knowledge tend to trust it even when they cannot verify its claims.

This can eventually lead to significant mistakes, causing the product to be perceived as incredibly unreliable, poorly designed, and neither useful nor helpful for the tasks at hand.
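One way to give a confidence score context is to pair it with a plain-language band, the evidence the system couldn't find, and a suggested next step. Below is a minimal sketch, assuming the system can report which pieces of evidence are missing. The `Prediction` record, the thresholds (0.85 and 0.5) and the messages are illustrative assumptions, not calibrated values:

```python
from dataclasses import dataclass, field


@dataclass
class Prediction:
    answer: str
    confidence: float  # model score in [0, 1]
    # Hypothetical: evidence the system looked for but could not find.
    missing: list[str] = field(default_factory=list)


def explain_confidence(p: Prediction) -> str:
    """Turn a bare score into a band, the known gaps, and a next step."""
    if not 0.0 <= p.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    # Illustrative thresholds; a real product would calibrate these against outcomes.
    if p.confidence >= 0.85:
        band, action = "High", "Spot-check the cited sources before relying on it."
    elif p.confidence >= 0.5:
        band, action = "Medium", "Verify the key facts yourself before acting."
    else:
        band, action = "Low", "Treat this as a starting point, not an answer."
    gaps = f" Missing: {', '.join(p.missing)}." if p.missing else ""
    return f"{band} confidence ({p.confidence:.0%}).{gaps} {action}"


print(explain_confidence(Prediction("Renewal is automatic", 0.37, ["contract end date"])))
# Low confidence (37%). Missing: contract end date. Treat this as a starting point, not an answer.
print(explain_confidence(Prediction("Invoice is valid", 0.89, ["supplier's tax ID"])))
# High confidence (89%). Missing: supplier's tax ID. Spot-check the cited sources before relying on it.
```

Even the high-confidence case names what's missing, so users know what to check instead of reading 89% as "safe".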

Wrapping Up

Our goal is to calibrate the level of transparency. It should match the user's expertise, their existing knowledge, and their decision-making process. Simply showing more of an AI's internal "thinking" can sometimes be helpful, but it can also be harmful.

It's also about the right dose of transparency: just enough for people to verify outputs and catch errors when needed.

Useful Resources

- From Trust to Surrender in One Click, by Kristine K

- 5 Psychological Foundations of User Trust in AI, by Anyi Sun

- Data Cards Playbook, by Google Research

- Design Patterns for Data Transparency, by Projects by IF